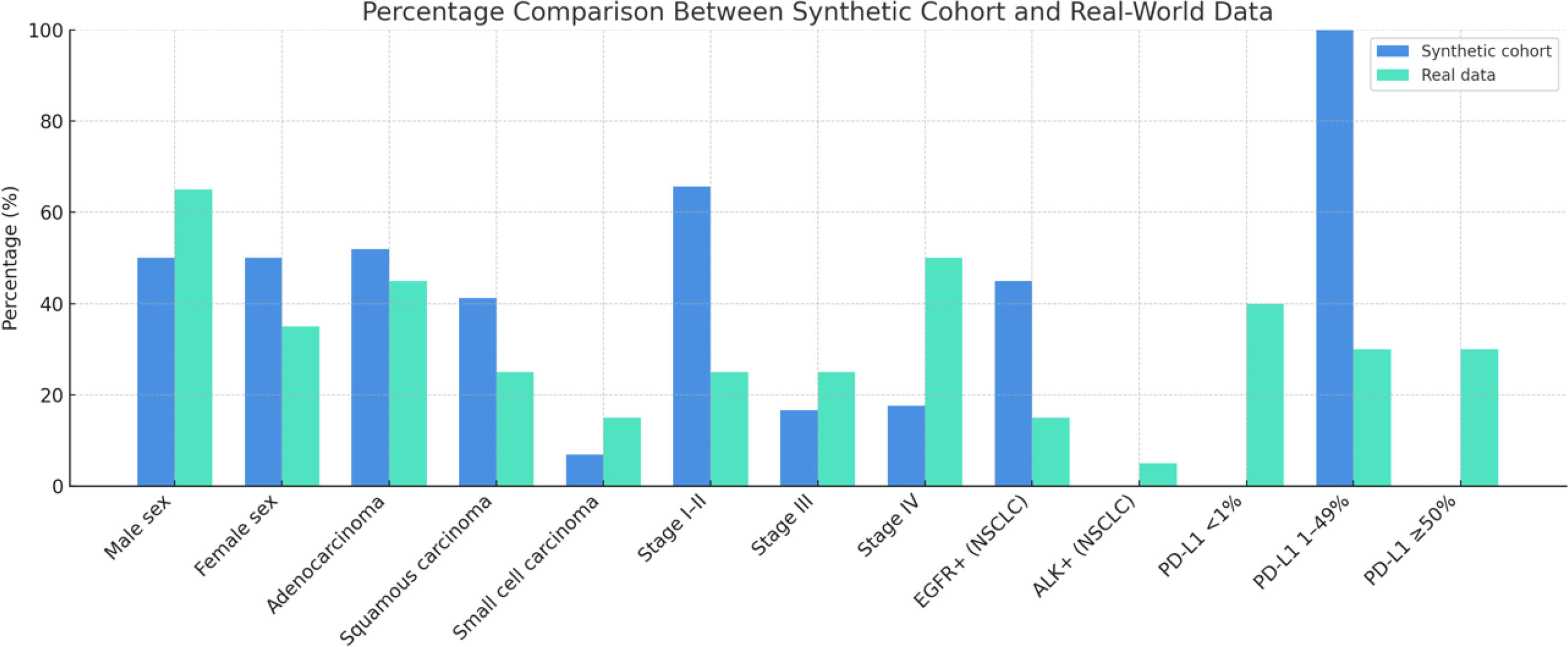

Large language models (LLMs) are increasingly used in medicine for clinical reasoning and educational simulation. This study assessed the epidemiological plausibility of a synthetic lung-cancer cohort generated by ChatGPT-4.0. A total of 102 virtual cases were created in Spanish using structured prompts that included demographic, histologic, and molecular variables. When compared descriptively with international datasets (GLOBOCAN 2020, SEER, and biomarker meta-analyses), the cohort reproduced general disease patterns but showed statistically significant deviations (p < 0.05): early-stage disease and EGFR-positive tumors were overrepresented, whereas advanced stages, ALK rearrangements, and extreme PD-L1 values were underrepresented. These discrepancies likely reflect biases in the model's training data and the probabilistic nature of generative language models. We discuss the utility of such cohorts for non-epidemiological tasks such as educational simulation, despite this quantified generative bias and provided that methodological transparency is maintained.
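As an illustration of how a deviation of this kind can be quantified, the following is a minimal sketch of a chi-square goodness-of-fit test for a single binary feature (for example, biomarker-positive versus biomarker-negative) against a reference proportion. The abstract does not state which test was applied, so this is not presented as the study's method. The function name `binary_goodness_of_fit`, the observed count, and the reference rate are hypothetical placeholders; only the cohort size of 102 comes from the text.

```python
import math


def binary_goodness_of_fit(observed_positive, n, reference_rate):
    """Chi-square goodness-of-fit test for one binary feature (1 degree of freedom).

    Returns (statistic, p_value). Hypothetical helper, not taken from the study.
    """
    if not 0.0 < reference_rate < 1.0:
        raise ValueError("reference_rate must lie strictly between 0 and 1")
    if not 0 <= observed_positive <= n:
        raise ValueError("observed_positive must lie between 0 and n")

    observed = (observed_positive, n - observed_positive)
    expected = (n * reference_rate, n * (1.0 - reference_rate))

    # Pearson statistic: sum over categories of (observed - expected)^2 / expected.
    statistic = sum((o - e) ** 2 / e for o, e in zip(observed, expected))

    # With 1 degree of freedom, the chi-square survival function reduces to
    # erfc(sqrt(x / 2)), so no external statistics library is needed.
    p_value = math.erfc(math.sqrt(statistic / 2.0))
    return statistic, p_value


if __name__ == "__main__":
    # ASSUMPTION: 40 positive cases and a 20% reference rate are illustrative
    # placeholders, not values reported by the study. Only n = 102 is from the text.
    stat, p = binary_goodness_of_fit(observed_positive=40, n=102, reference_rate=0.20)
    print(f"chi2 = {stat:.2f}, p = {p:.2g}")
```

A variable with more than two categories, such as stage I to IV, would need the general chi-square distribution with k - 1 degrees of freedom, and small expected counts would call for an exact test instead.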